The code in this post builds a decision tree and then visualizes it. Below, I walk through it section by section.

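1. Import the required libraries

```python
# -*- coding: UTF-8 -*-
import os

# Replace with your actual Qt plugin directory.
# It is safest to set this before Qt is loaded, i.e. before importing matplotlib.pyplot.
qt_plugin_path = 'f:/cv/.venv/Lib/site-packages/PyQt5/Qt5/plugins'
os.environ['QT_PLUGIN_PATH'] = qt_plugin_path

import matplotlib
matplotlib.use('QtAgg')  # needs a Qt binding (e.g. PyQt5) to be installed

from matplotlib.font_manager import FontProperties
import matplotlib.pyplot as plt
from math import log
import operator
```

- Declares the source file encoding as UTF-8.
- Sets the `QT_PLUGIN_PATH` environment variable so that the Qt plugins load correctly.
- Imports `matplotlib` and selects the `QtAgg` backend.
- Imports the other dependencies: matplotlib's font manager (`FontProperties`) and plotting interface (`pyplot`), the logarithm function `log` from the `math` module, and the `operator` module.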

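2. Create the dataset

```python
def createDataSet():
    # Each sample: four feature values followed by the class label
    dataSet = [[0, 0, 0, 0, 'no'],
               [0, 0, 0, 1, 'no'],
               [0, 1, 0, 1, 'yes'],
               [0, 1, 1, 0, 'yes'],
               [0, 0, 0, 0, 'no'],
               [1, 0, 0, 0, 'no'],
               [1, 0, 0, 1, 'no'],
               [1, 1, 1, 1, 'yes'],
               [1, 0, 1, 2, 'yes'],
               [1, 0, 1, 2, 'yes'],
               [2, 0, 1, 2, 'yes'],
               [2, 0, 1, 1, 'yes'],
               [2, 1, 0, 1, 'yes'],
               [2, 1, 0, 2, 'yes'],
               [2, 0, 0, 0, 'no']]
    # Names of the four features, in column order
    labels = ['F1-AGE', 'F2-WORK', 'F3-HOME', 'F4-LOAN']
    return dataSet, labels
```

`createDataSet` builds the dataset: a list of samples, each made up of several feature values followed by a class label. The `labels` list gives the name of each feature.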
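As a quick sanity check, the class distribution of this dataset can be counted with `collections.Counter`. The snippet below is a standalone sketch that copies the same 15 rows so it runs on its own.

```python
from collections import Counter

# Same 15 samples as createDataSet(): four feature values + class label
dataSet = [[0, 0, 0, 0, 'no'], [0, 0, 0, 1, 'no'], [0, 1, 0, 1, 'yes'],
           [0, 1, 1, 0, 'yes'], [0, 0, 0, 0, 'no'], [1, 0, 0, 0, 'no'],
           [1, 0, 0, 1, 'no'], [1, 1, 1, 1, 'yes'], [1, 0, 1, 2, 'yes'],
           [1, 0, 1, 2, 'yes'], [2, 0, 1, 2, 'yes'], [2, 0, 1, 1, 'yes'],
           [2, 1, 0, 1, 'yes'], [2, 1, 0, 2, 'yes'], [2, 0, 0, 0, 'no']]

# The class label is the last element of each row
classCounts = Counter(row[-1] for row in dataSet)
print(classCounts)  # Counter({'yes': 9, 'no': 6})
```

With 9 "yes" and 6 "no" samples, the root node starts out impure, so the tree has to split at least once.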

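3. Build the decision tree

```python
def createTree(dataset, labels, featLabels):
    classList = [example[-1] for example in dataset]
    # Stop 1: every sample has the same class -> return that class as a leaf
    if classList.count(classList[0]) == len(classList):
        return classList[0]
    # Stop 2: only the class column is left -> return the majority class
    if len(dataset[0]) == 1:
        return majorityCnt(classList)
    bestFeat = chooseBestFeatureToSplit(dataset)
    bestFeatLabel = labels[bestFeat]
    featLabels.append(bestFeatLabel)       # record the order in which features are used
    myTree = {bestFeatLabel: {}}
    del labels[bestFeat]                   # note: this modifies the caller's labels list
    featValue = [example[bestFeat] for example in dataset]
    uniqueVals = set(featValue)
    for value in uniqueVals:
        sublabels = labels[:]              # copy so sibling branches do not interfere
        myTree[bestFeatLabel][value] = createTree(
            splitDataSet(dataset, bestFeat, value), sublabels, featLabels)
    return myTree
```

`createTree` builds the tree recursively:

- If all samples in the dataset have the same class label, it returns that label.
- If only the class label column is left (all features have been used), it calls `majorityCnt` to return the most frequent class label.
- Otherwise it calls `chooseBestFeatureToSplit` to pick the best feature, splits the dataset on each distinct value of that feature, and recursively builds one subtree per value. The result is a nested dictionary of the form `{feature name: {feature value: subtree or class label}}`.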
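The original code only plots the tree, but the nested-dictionary form makes prediction straightforward. Below is a sketch of a hypothetical `classify` helper (not part of the original program) that walks the tree for a new sample. The tree literal is what I get by tracing `createTree` by hand on the dataset above (the root splits on `F3-HOME`, then on `F2-WORK`); treat it as an assumption if you change the data.

```python
def classify(tree, featNames, sample):
    """Hypothetical helper: walk a nested-dict decision tree down to a class label.

    tree      -- {feature name: {feature value: subtree or class label}}
    featNames -- full, unmodified list of feature names in column order
    sample    -- feature values in the same column order
    """
    node = tree
    while isinstance(node, dict):
        featName = next(iter(node))                  # feature tested at this node
        value = sample[featNames.index(featName)]    # the sample's value for it
        node = node[featName][value]                 # KeyError if value unseen in training
    return node


featNames = ['F1-AGE', 'F2-WORK', 'F3-HOME', 'F4-LOAN']
myTree = {'F3-HOME': {0: {'F2-WORK': {0: 'no', 1: 'yes'}}, 1: 'yes'}}

print(classify(myTree, featNames, [0, 0, 0, 0]))  # no
print(classify(myTree, featNames, [0, 1, 0, 2]))  # yes
print(classify(myTree, featNames, [2, 0, 1, 0]))  # yes
```

Because `createTree` deletes entries from the `labels` list it receives, pass a fresh list of feature names (here `featNames`) rather than reusing `labels` after the tree has been built.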

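4. Majority vote

```python
def majorityCnt(classList):
    classCount = {}
    # Count the occurrences of each class label
    for vote in classList:
        if vote not in classCount:
            classCount[vote] = 0
        classCount[vote] += 1
    # Sort (label, count) pairs by count, largest first
    sortedclassCount = sorted(classCount.items(), key=operator.itemgetter(1), reverse=True)
    return sortedclassCount[0][0]
```

`majorityCnt` counts how often each class label occurs and returns the most frequent one.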
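The same majority vote can be written more compactly with `collections.Counter.most_common`. This is an alternative sketch, not the original code; for ties it behaves the same way, since `most_common` orders equal counts by first appearance, just as the stable `sorted` call above keeps the dictionary's insertion order.

```python
from collections import Counter


def majorityCnt(classList):
    # most_common(1) returns [(label, count)] for the most frequent label
    return Counter(classList).most_common(1)[0][0]


print(majorityCnt(['yes', 'no', 'yes']))  # yes
```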

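5. Choose the best feature

```python
def chooseBestFeatureToSplit(dataset):
    numFeatures = len(dataset[0]) - 1          # the last column is the class label
    baseEntropy = calcShannonEnt(dataset)       # entropy before splitting
    bestInfoGain = 0.0
    # Default to feature 0: a default of -1 would index the class-label column
    # whenever no feature yields a positive gain
    bestFeature = 0
    for i in range(numFeatures):
        featList = [example[i] for example in dataset]
        uniqueVals = set(featList)
        newEntropy = 0.0
        for val in uniqueVals:
            subDataSet = splitDataSet(dataset, i, val)
            prob = len(subDataSet) / float(len(dataset))
            newEntropy += prob * calcShannonEnt(subDataSet)   # weighted entropy after the split
        infoGain = baseEntropy - newEntropy
        if infoGain > bestInfoGain:
            bestInfoGain = infoGain
            bestFeature = i
    return bestFeature
```

`chooseBestFeatureToSplit` selects the best feature by computing information gain:

- It first computes the entropy of the whole dataset, `baseEntropy`.
- For each feature, it computes the weighted entropy after splitting on that feature, `newEntropy`, and from it the information gain `infoGain = baseEntropy - newEntropy`.
- It returns the index of the feature with the largest information gain.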
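To make the information-gain step concrete, here is a standalone sketch that computes the gain of every feature on the dataset from section 2. The helper names `entropy` and `info_gains` are my own (not from the original); they follow the same formula as `chooseBestFeatureToSplit`: gain(A) = H(D) - Σ_v (|D_v| / |D|) · H(D_v).

```python
from collections import Counter
from math import log2


def entropy(rows):
    # Shannon entropy of the class labels (last column)
    counts = Counter(row[-1] for row in rows)
    n = len(rows)
    return -sum(c / n * log2(c / n) for c in counts.values())


def info_gains(rows):
    base = entropy(rows)
    gains = []
    for i in range(len(rows[0]) - 1):          # every feature column
        cond = 0.0                             # weighted entropy after splitting on i
        for v in set(row[i] for row in rows):
            subset = [row for row in rows if row[i] == v]
            cond += len(subset) / len(rows) * entropy(subset)
        gains.append(base - cond)
    return gains


dataSet = [[0, 0, 0, 0, 'no'], [0, 0, 0, 1, 'no'], [0, 1, 0, 1, 'yes'],
           [0, 1, 1, 0, 'yes'], [0, 0, 0, 0, 'no'], [1, 0, 0, 0, 'no'],
           [1, 0, 0, 1, 'no'], [1, 1, 1, 1, 'yes'], [1, 0, 1, 2, 'yes'],
           [1, 0, 1, 2, 'yes'], [2, 0, 1, 2, 'yes'], [2, 0, 1, 1, 'yes'],
           [2, 1, 0, 1, 'yes'], [2, 1, 0, 2, 'yes'], [2, 0, 0, 0, 'no']]
labels = ['F1-AGE', 'F2-WORK', 'F3-HOME', 'F4-LOAN']

gains = info_gains(dataSet)
for name, g in zip(labels, gains):
    print(f"{name}: {g:.3f}")
```

The gains come out to about 0.083 (F1-AGE), 0.324 (F2-WORK), 0.420 (F3-HOME) and 0.363 (F4-LOAN), so `F3-HOME` (index 2) is chosen for the root split.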

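6. Split the dataset

```python
def splitDataSet(dataset, axis, val):
    retDataSet = []
    for featVec in dataset:
        if featVec[axis] == val:
            # Keep the sample but drop the column used for the split
            reducedFeatVec = featVec[:axis]
            reducedFeatVec.extend(featVec[axis + 1:])
            retDataSet.append(reducedFeatVec)
    return retDataSet
```

`splitDataSet` selects the samples whose feature at index `axis` equals `val` and returns them with that feature column removed.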
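A small example on a toy three-column dataset (made up purely for illustration) shows how the split column disappears from the result. The list-comprehension form below is an equivalent, more compact way to write the same function.

```python
def splitDataSet(dataset, axis, val):
    # Keep rows whose column `axis` equals val, dropping that column from each kept row
    return [featVec[:axis] + featVec[axis + 1:]
            for featVec in dataset if featVec[axis] == val]


toy = [[1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no']]
print(splitDataSet(toy, 0, 1))  # [[1, 'yes'], [0, 'no']]
print(splitDataSet(toy, 1, 0))  # [[1, 'no']]
```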

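7. Compute the Shannon entropy

```python
def calcShannonEnt(dataset):
    numexamples = len(dataset)
    labelCounts = {}
    # Count how many samples fall into each class
    for featVec in dataset:
        currentlabel = featVec[-1]
        if currentlabel not in labelCounts:
            labelCounts[currentlabel] = 0
        labelCounts[currentlabel] += 1
    # H(D) = -sum(p * log2(p)) over all classes
    shannonEnt = 0.0
    for key in labelCounts:
        prop = float(labelCounts[key]) / numexamples
        shannonEnt -= prop * log(prop, 2)
    return shannonEnt
```

`calcShannonEnt` computes the Shannon entropy of the dataset's class labels, which measures how mixed (impure) the dataset is: 0 when all samples share one class, and larger the more evenly the classes are mixed.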
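`calcShannonEnt` implements H(D) = -Σ_k p_k · log2(p_k), where p_k is the fraction of samples in class k. As a worked example, the full dataset has 9 "yes" and 6 "no" samples out of 15, and an evenly split two-class set gives the maximum entropy of 1 bit:

```python
from math import log2

# Full dataset: 9 'yes' and 6 'no' out of 15 samples
p_yes, p_no = 9 / 15, 6 / 15
H = -(p_yes * log2(p_yes) + p_no * log2(p_no))
print(round(H, 3))  # 0.971

# A 50/50 two-class set is maximally mixed: exactly 1 bit
H_half = -(0.5 * log2(0.5) + 0.5 * log2(0.5))
print(H_half)  # 1.0
```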

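8. Count the leaves and the depth of the tree

```python
def getNumLeafs(myTree):
    numLeafs = 0
    firstStr = next(iter(myTree))      # feature name at this node
    secondDict = myTree[firstStr]      # {feature value: subtree or class label}
    for key in secondDict.keys():
        if isinstance(secondDict[key], dict):
            numLeafs += getNumLeafs(secondDict[key])   # subtree: count its leaves
        else:
            numLeafs += 1                              # class label: one leaf
    return numLeafs


def getTreeDepth(myTree):
    maxDepth = 0
    firstStr = next(iter(myTree))
    secondDict = myTree[firstStr]
    for key in secondDict.keys():
        if isinstance(secondDict[key], dict):
            thisDepth = 1 + getTreeDepth(secondDict[key])
        else:
            thisDepth = 1      # a leaf directly below this node adds one level
        if thisDepth > maxDepth:
            maxDepth = thisDepth
    return maxDepth
```

`getNumLeafs` recursively counts the leaf nodes of the tree, and `getTreeDepth` recursively computes its depth (the number of decision levels). `createPlot` uses these two values to size the layout.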
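For the tree this dataset produces, `{'F3-HOME': {0: {'F2-WORK': {0: 'no', 1: 'yes'}}, 1: 'yes'}}` (hand-traced from `createTree`), there are 3 leaves and 2 decision levels. The sketch below is a compact recursive variant using `sum` and `max` over the branches; it is a rewrite for illustration and returns the same values as the functions above.

```python
def getNumLeafs(tree):
    branches = tree[next(iter(tree))]      # {feature value: subtree or label}
    return sum(getNumLeafs(b) if isinstance(b, dict) else 1
               for b in branches.values())


def getTreeDepth(tree):
    branches = tree[next(iter(tree))]
    return max(1 + getTreeDepth(b) if isinstance(b, dict) else 1
               for b in branches.values())


myTree = {'F3-HOME': {0: {'F2-WORK': {0: 'no', 1: 'yes'}}, 1: 'yes'}}
print(getNumLeafs(myTree), getTreeDepth(myTree))  # 3 2
```

Note that the depth counts decision levels, not node levels: a tree with a single split has depth 1.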

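9. Draw nodes and edge labels

```python
def plotNode(nodeTxt, centerPt, parentPt, nodeType):
    arrow_args = dict(arrowstyle="<-")
    # Windows-specific font path; adjust it (or drop fontproperties) on other systems
    font = FontProperties(fname=r"c:\windows\fonts\simsunb.ttf", size=14)
    # createPlot.ax1 is the Axes created in createPlot()
    createPlot.ax1.annotate(nodeTxt, xy=parentPt, xycoords='axes fraction',
                            xytext=centerPt, textcoords='axes fraction',
                            va="center", ha="center", bbox=nodeType,
                            arrowprops=arrow_args, fontproperties=font)


def plotMidText(cntrPt, parentPt, txtString):
    # Midpoint of the edge between the parent and the child node
    xMid = (parentPt[0] - cntrPt[0]) / 2.0 + cntrPt[0]
    yMid = (parentPt[1] - cntrPt[1]) / 2.0 + cntrPt[1]
    createPlot.ax1.text(xMid, yMid, txtString, va="center", ha="center", rotation=30)
```

`plotNode` draws one node: a text box at `centerPt`, styled by `nodeType` and connected by an arrow to the parent node at `parentPt`. `plotMidText` writes a label (the feature value) at the midpoint of the edge between a parent and a child node.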
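The core of `plotNode` is a single `Axes.annotate` call: `xy` is the parent position, `xytext` the child position (both in axes-fraction coordinates), and `bbox` / `arrowprops` style the box and the arrow. Below is a minimal standalone sketch of one parent-child pair with its edge label. It assumes the non-interactive `Agg` backend so it runs without Qt, renders to an in-memory PNG instead of opening a window, and omits the Windows-specific font.

```python
import io

import matplotlib
matplotlib.use('Agg')            # non-interactive backend: no Qt required
import matplotlib.pyplot as plt

fig, ax = plt.subplots()
ax.set_axis_off()

parentPt, childPt = (0.5, 0.9), (0.3, 0.4)

# Parent (decision) node: a box at parentPt, no arrow
ax.annotate('F3-HOME', xy=parentPt, xycoords='axes fraction',
            va='center', ha='center', bbox=dict(boxstyle='sawtooth', fc='0.8'))

# Child (leaf) node: box at childPt, arrow connecting it to the parent
ax.annotate('yes', xy=parentPt, xycoords='axes fraction',
            xytext=childPt, textcoords='axes fraction',
            va='center', ha='center', bbox=dict(boxstyle='round4', fc='0.8'),
            arrowprops=dict(arrowstyle='<-'))

# Edge label at the midpoint, as plotMidText does (explicitly in axes coordinates here)
mid = ((parentPt[0] + childPt[0]) / 2, (parentPt[1] + childPt[1]) / 2)
ax.text(mid[0], mid[1], '1', va='center', ha='center', rotation=30,
        transform=ax.transAxes)

buf = io.BytesIO()
fig.savefig(buf, format='png')
plt.close(fig)
```

The original `plotMidText` passes plain data coordinates to `text`; that lines up with the annotations only because the freshly created axes keep their default 0 to 1 limits.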

10. Draw the decision tree

```python
def plotTree(myTree, parentPt, nodeTxt):
    decisionNode = dict(boxstyle="sawtooth", fc="0.8")   # style for decision (feature) nodes
    leafNode = dict(boxstyle="round4", fc="0.8")          # style for leaf (class) nodes
    numLeafs = getNumLeafs(myTree)
    firstStr = next(iter(myTree))
    # Center this node horizontally over the leaves it spans
    cntrPt = (plotTree.xOff + (1.0 + float(numLeafs)) / 2.0 / plotTree.totalW, plotTree.yOff)
    plotMidText(cntrPt, parentPt, nodeTxt)       # feature value on the incoming edge
    plotNode(firstStr, cntrPt, parentPt, decisionNode)
    secondDict = myTree[firstStr]
    plotTree.yOff = plotTree.yOff - 1.0 / plotTree.totalD   # move down one level
    for key in secondDict.keys():
        if isinstance(secondDict[key], dict):
            plotTree(secondDict[key], cntrPt, str(key))      # subtree: recurse
        else:
            plotTree.xOff = plotTree.xOff + 1.0 / plotTree.totalW   # next leaf slot
            plotNode(secondDict[key], (plotTree.xOff, plotTree.yOff), cntrPt, leafNode)
            plotMidText((plotTree.xOff, plotTree.yOff), cntrPt, str(key))
    plotTree.yOff = plotTree.yOff + 1.0 / plotTree.totalD   # back up for the next sibling


def createPlot(inTree):
    fig = plt.figure(1, facecolor='white')   # create the figure
    fig.clf()                                # clear it
    axprops = dict(xticks=[], yticks=[])
    createPlot.ax1 = plt.subplot(111, frameon=False, **axprops)   # hide the x and y axes
    plotTree.totalW = float(getNumLeafs(inTree))    # number of leaves (tree width)
    plotTree.totalD = float(getTreeDepth(inTree))   # number of levels (tree depth)
    plotTree.xOff = -0.5 / plotTree.totalW          # x offset: one slot left of the first leaf
    plotTree.yOff = 1.0                             # y offset: start at the top
    plotTree(inTree, (0.5, 1.0), '')                # draw the tree
    plt.show()
```

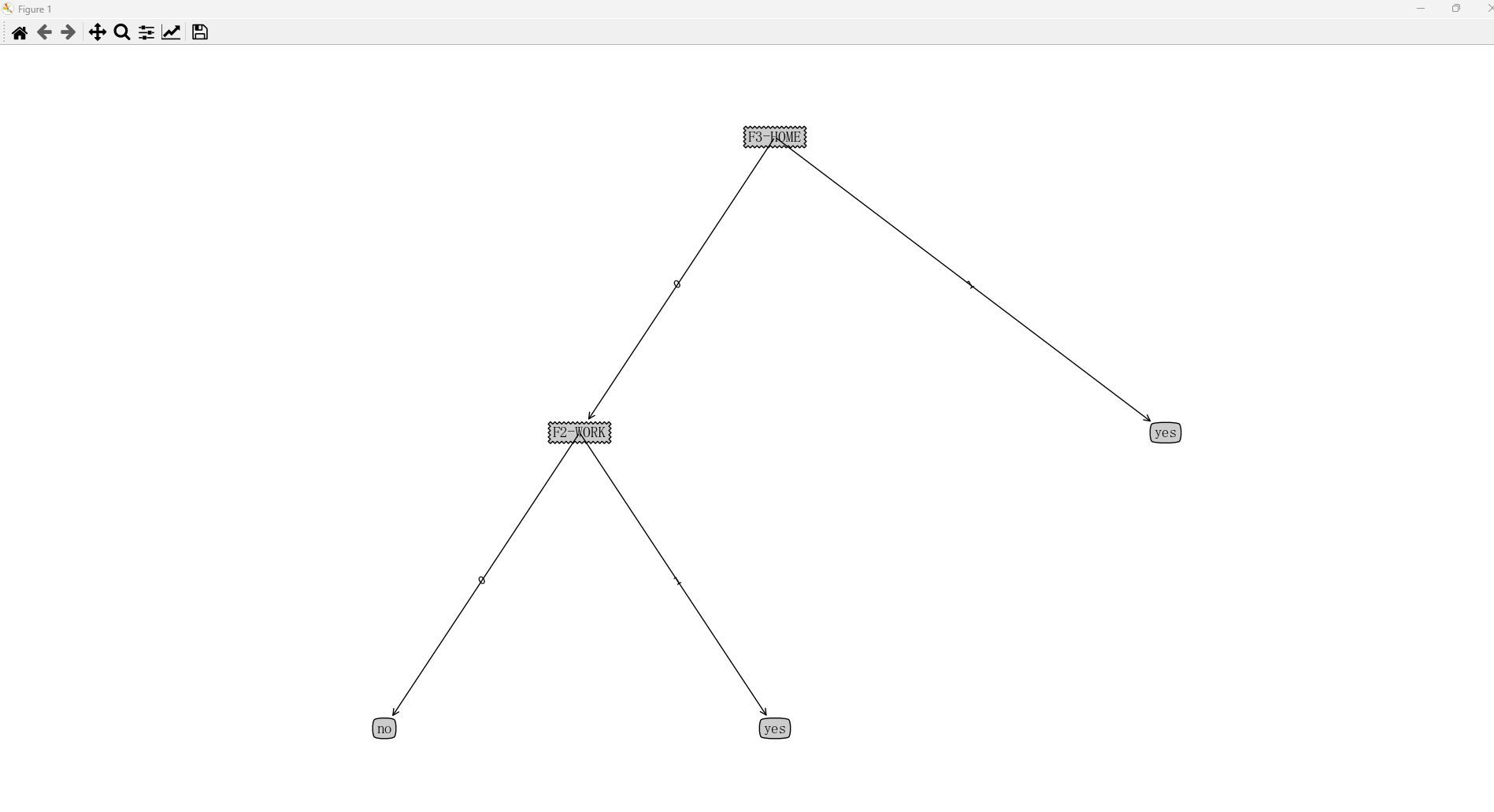

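`plotTree` draws the tree recursively, using the function attributes `plotTree.xOff` and `plotTree.yOff` to track the current drawing position. `createPlot` sets up the figure, calls `plotTree` to draw the tree, and finally displays the figure.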
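To see where `plotTree` places each node, the hypothetical helper `layout` below (not in the original) replays the same offset arithmetic (`xOff`, `yOff`, `totalW`, `totalD`) without matplotlib and simply records the coordinates. Leaves land in equally spaced slots at x = (i + 0.5) / totalW, and each decision node is centered over the leaves beneath it.

```python
def layout(tree):
    """Hypothetical helper: return [(text, x, y)] in the order plotTree draws nodes."""
    def numLeafs(t):
        b = t[next(iter(t))]
        return sum(numLeafs(v) if isinstance(v, dict) else 1 for v in b.values())

    def depth(t):
        b = t[next(iter(t))]
        return max(1 + depth(v) if isinstance(v, dict) else 1 for v in b.values())

    totalW, totalD = float(numLeafs(tree)), float(depth(tree))
    state = {'xOff': -0.5 / totalW, 'yOff': 1.0}   # same start values as createPlot
    out = []

    def walk(t):
        name = next(iter(t))
        cx = state['xOff'] + (1.0 + numLeafs(t)) / 2.0 / totalW   # centered over its leaves
        out.append((name, cx, state['yOff']))
        state['yOff'] -= 1.0 / totalD                # children sit one level lower
        for child in t[name].values():
            if isinstance(child, dict):
                walk(child)
            else:
                state['xOff'] += 1.0 / totalW        # next leaf slot
                out.append((child, state['xOff'], state['yOff']))
        state['yOff'] += 1.0 / totalD                # restore the level

    walk(tree)
    return out


myTree = {'F3-HOME': {0: {'F2-WORK': {0: 'no', 1: 'yes'}}, 1: 'yes'}}
for text, x, y in layout(myTree):
    print(f"{text:8s} x={x:.3f} y={y:.2f}")
```

For this tree the root lands at (0.5, 1.0), `F2-WORK` at (1/3, 0.5), its two leaves at (1/6, 0) and (1/2, 0), and the remaining "yes" leaf at (5/6, 0.5).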

11. Main program

```python
if __name__ == '__main__':
    dataset, labels = createDataSet()
    featLabels = []
    myTree = createTree(dataset, labels, featLabels)
    createPlot(myTree)
```

- Calls `createDataSet` to create the dataset.
- Calls `createTree` to build the decision tree.
- Calls `createPlot` to draw and display the tree.

Overall, this code builds a decision tree recursively, choosing the best feature at each node by information gain, and then visualizes the result with matplotlib.